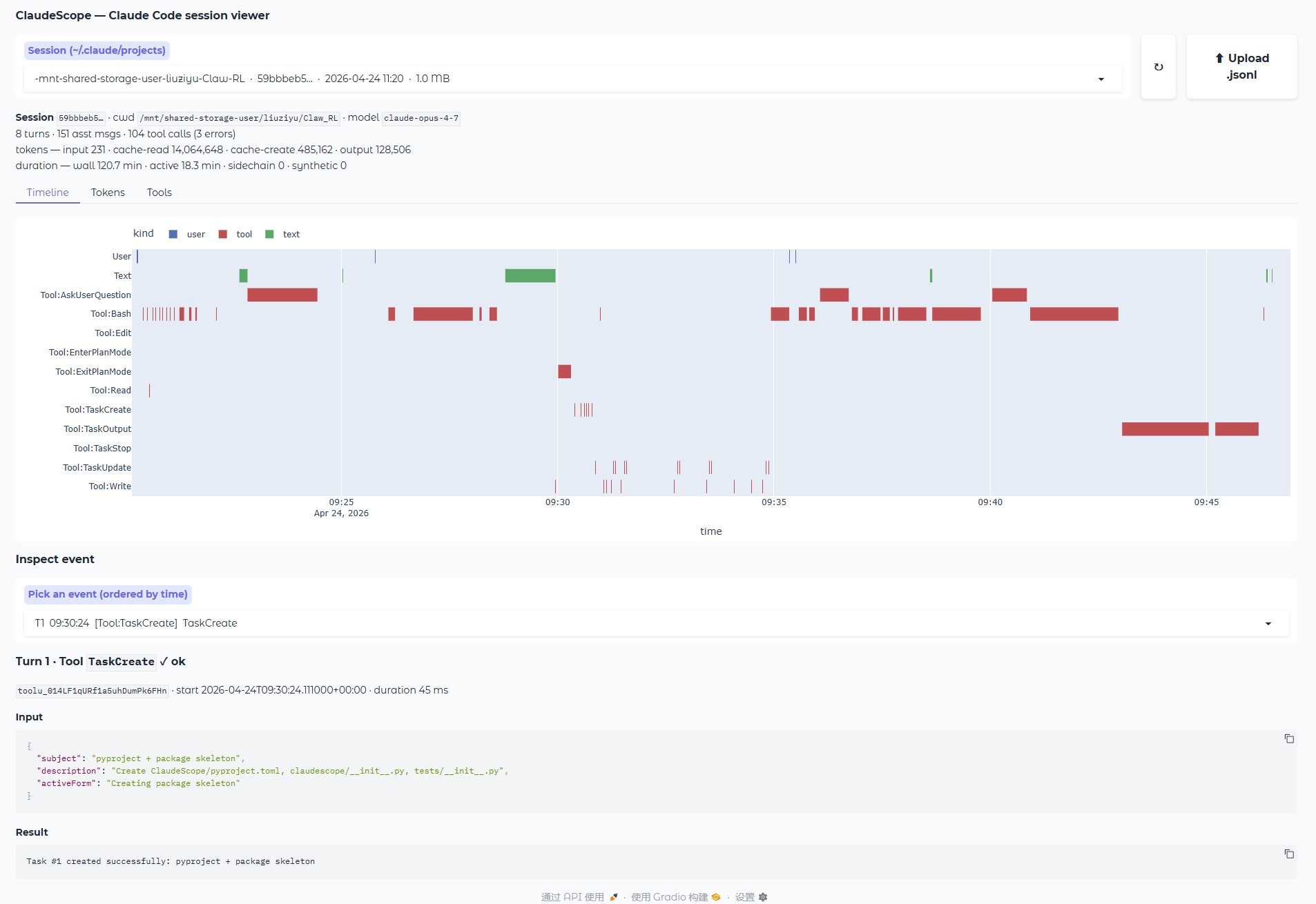

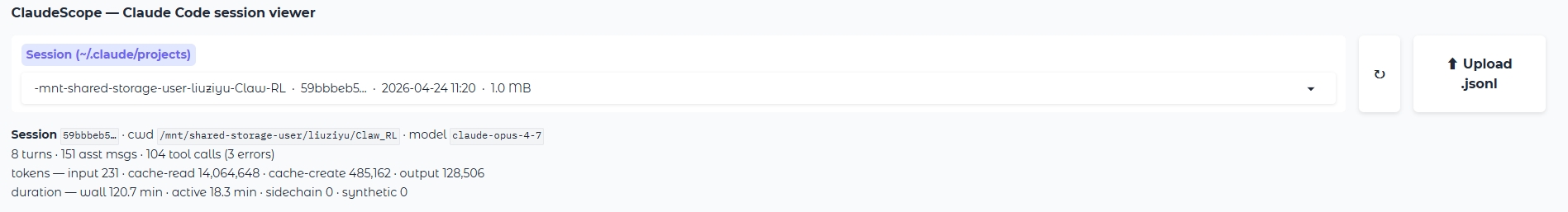

Every time you use Claude Code, a full trace of the session is recorded as a JSONL file under ~/.claude/projects/: every user prompt, every assistant reply, every thinking block, and every tool call (Bash, Edit, Read, TaskCreate, Agent, …), along with exact timestamps and token usage. ClaudeScope turns that raw log into an interactive Gradio dashboard, so you can watch what the agent did: when it was reasoning, when it was waiting on a slow Bash command, where tokens were being spent, and exactly what failed. It is built for researchers who study agent behaviour (failure modes, action patterns, cost) and for developers who simply want to understand where their Claude Code session time went.
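To make the raw input concrete, here is a minimal sketch of reading one such JSONL session file into Python dictionaries. It is not ClaudeScope's own loader. The helper names `load_events` and `count_by_type` are hypothetical, and the `type` field is an assumed key used only for illustration, since the log schema is not described here.

```python
import json
from collections import Counter
from pathlib import Path


def load_events(path):
    """Read one JSONL file into a list of dicts.

    Blank lines, malformed lines (for example a truncated final line),
    and records that are not JSON objects are skipped.
    """
    events = []
    with Path(path).open(encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if not line:
                continue
            try:
                record = json.loads(line)
            except json.JSONDecodeError:
                continue
            if isinstance(record, dict):
                events.append(record)
    return events


def count_by_type(events, key="type"):
    """Count events per value of `key`.

    `key` defaults to "type", which is an assumed field name,
    not a documented part of the log format.
    """
    return Counter(str(event.get(key, "unknown")) for event in events)
```

Calling `count_by_type(load_events(some_path))` on a session file gives a quick overview of what it contains before any plotting. Inspect a real file first to find the field names that actually apply.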

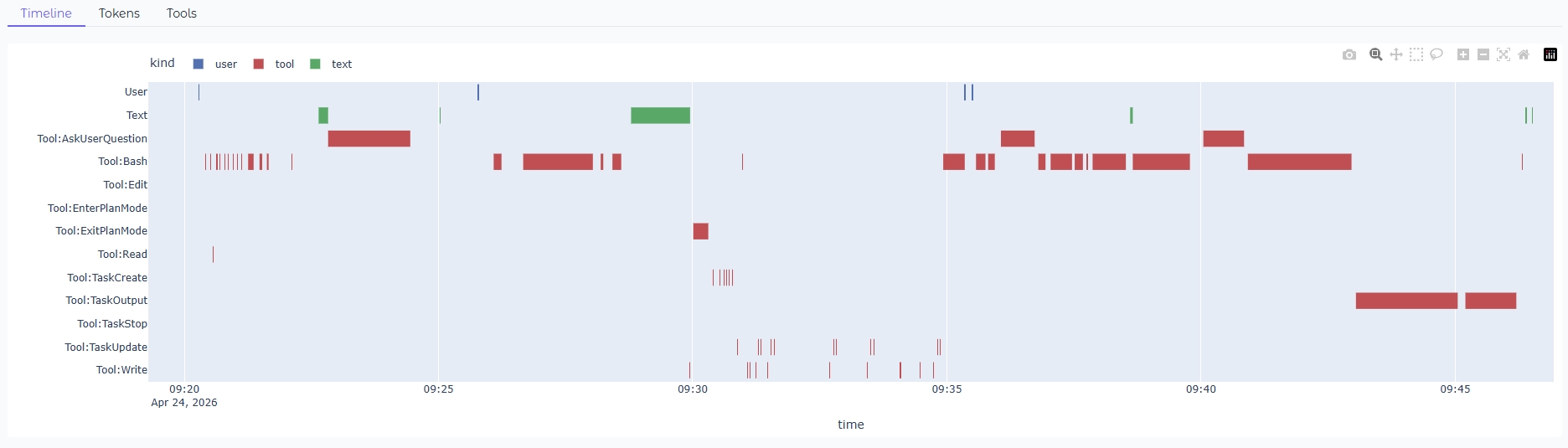

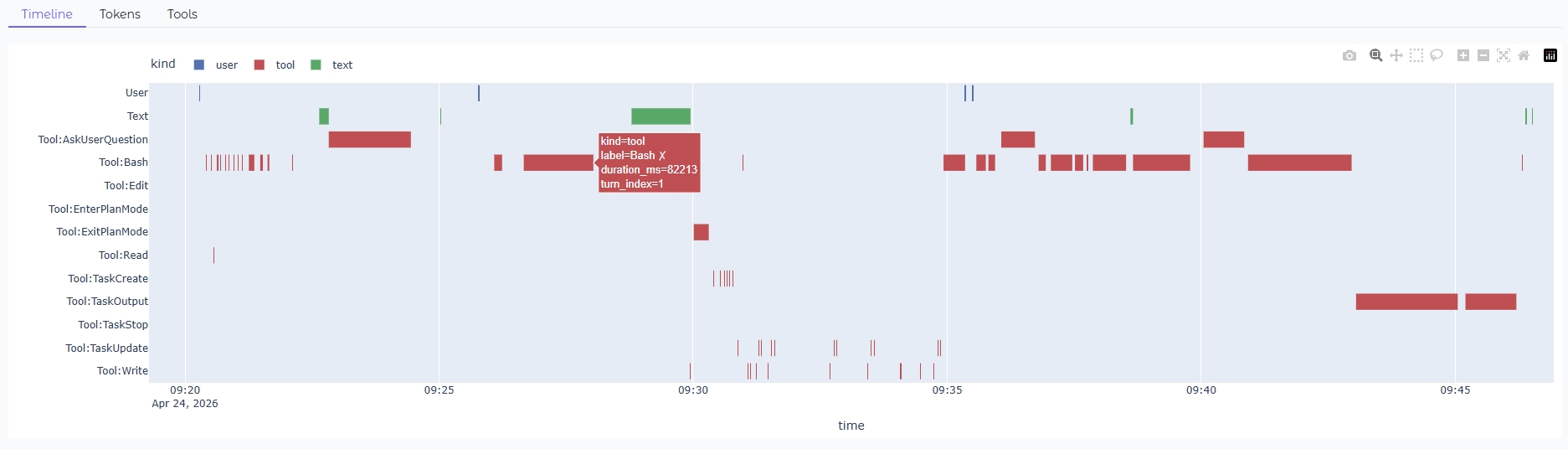

Timeline Gantt

Every user / text / thinking / tool block on parallel lanes across real time.

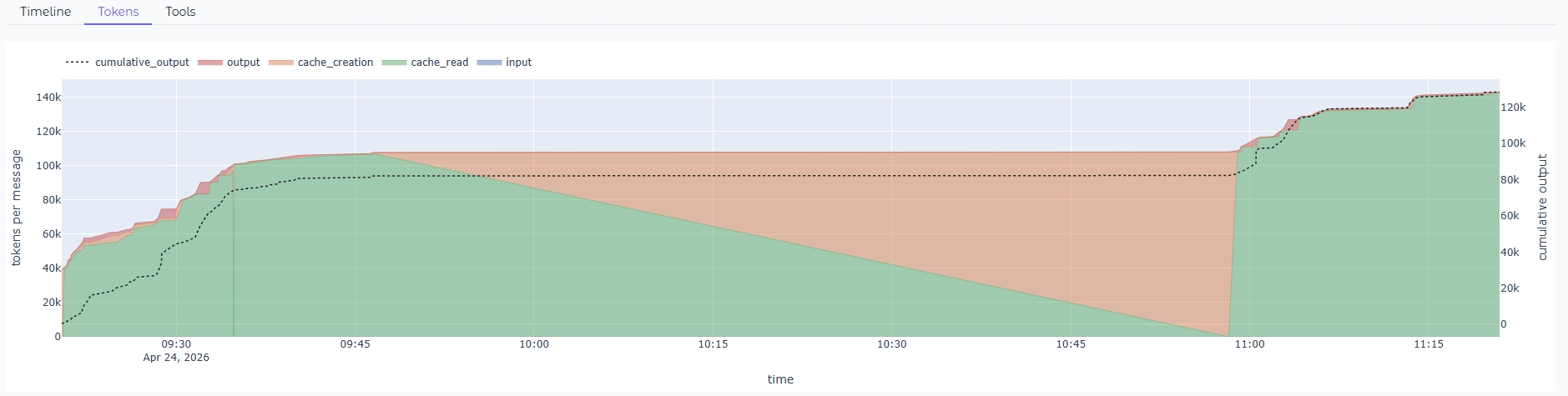

Token Stream

Per-message stacked tokens with cumulative output, so you can see cost grow.

Tool Stats

Per-tool call counts, wall time, and failure rates, so you can find the tool eating your session.

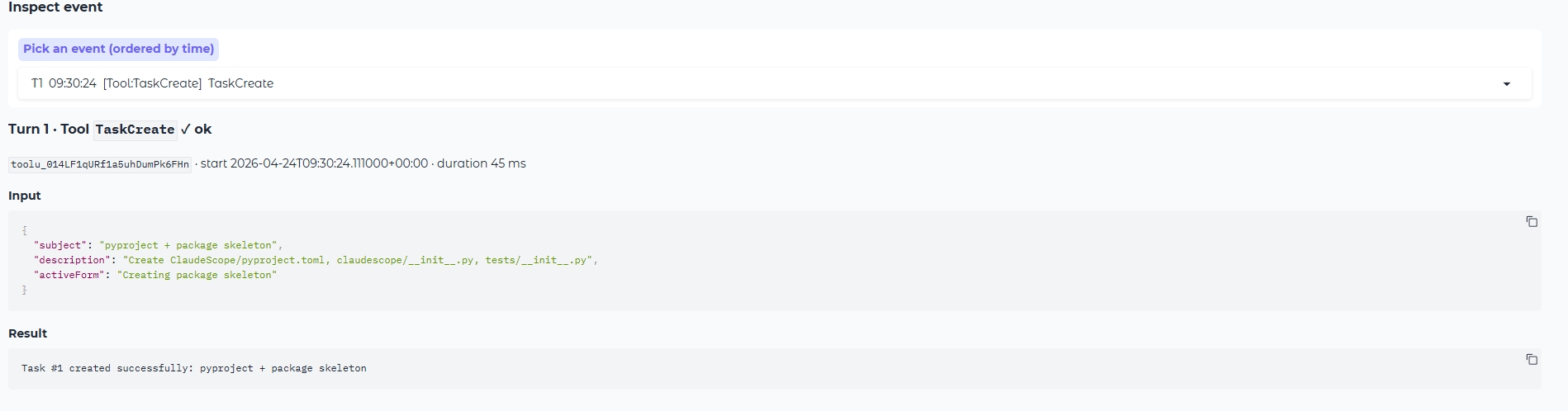

Event Inspector

Drill into any single step — inputs, outputs, thinking, success or error.
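The aggregates behind the Tool Stats and Token Stream views can be sketched as follows. This is a rough illustration under stated assumptions rather than ClaudeScope's implementation: the input is a pre-simplified list of tool calls, and the keys `tool`, `duration_s`, and `ok`, as well as the function names, are hypothetical.

```python
from collections import defaultdict
from itertools import accumulate


def tool_stats(calls):
    """Aggregate call count, total wall time, and failure rate per tool.

    Each call is a dict with the hypothetical keys "tool" (str),
    "duration_s" (float), and "ok" (bool).
    """
    stats = defaultdict(lambda: {"calls": 0, "wall_s": 0.0, "failures": 0})
    for call in calls:
        entry = stats[call["tool"]]
        entry["calls"] += 1
        entry["wall_s"] += call["duration_s"]
        if not call["ok"]:
            entry["failures"] += 1
    return {
        name: {**entry, "failure_rate": entry["failures"] / entry["calls"]}
        for name, entry in stats.items()
    }


def cumulative_output(tokens_per_message):
    """Running total of output tokens, one value per message."""
    return list(accumulate(tokens_per_message))
```

Sorting the result of `tool_stats` by `wall_s` surfaces the tool that consumed the most session time, and plotting `cumulative_output` against message index shows how cost grows over the session.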